
7/31/2023

Spark SQL ILIKE

The `rlike()` function takes a literal regex expression string as a parameter and returns a boolean column based on a regex match. The equivalent SQL operator has the syntax `str [NOT] rlike regex`, where `str` is a STRING expression to be matched and `regex` is a STRING expression with a matching pattern.

If for some reason a Hive context is not an option, you can use a custom UDF, for example one that does a plain substring check:

    import org.apache.spark.sql.functions.udf

    // Assumed body: a plain substring check of p inside s
    val simple_like = udf((s: String, p: String) => s.contains(p))
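The regex-match behaviour of `rlike` described above can be modelled in plain Python. This is only a sketch, not Spark's implementation: `rlike` and `not_rlike` are hypothetical helper names, Python's `re` module stands in for the Java regex engine Spark uses, and the sketch assumes a match anywhere in the string counts and that a NULL operand gives a NULL result.

```python
import re
from typing import Optional


def rlike(value: Optional[str], regex: Optional[str]) -> Optional[bool]:
    """Hypothetical stand-in for `str rlike regex` (not Spark's implementation)."""
    # Assumption: a NULL operand yields NULL (None here), not True or False.
    if value is None or regex is None:
        return None
    # re.search accepts a match anywhere in the string.
    return re.search(regex, value) is not None


def not_rlike(value: Optional[str], regex: Optional[str]) -> Optional[bool]:
    """Hypothetical stand-in for `str NOT rlike regex`."""
    result = rlike(value, regex)
    return None if result is None else not result


print(rlike("foobar", "^foo"))      # True
print(rlike("foobar", "^bar"))      # False
print(not_rlike("foobar", "^bar"))  # True
print(rlike(None, "foo"))           # None
```

Unanchored patterns such as `bar` would also match `foobar` here, which is why the examples use `^` to pin the match to the start of the string.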

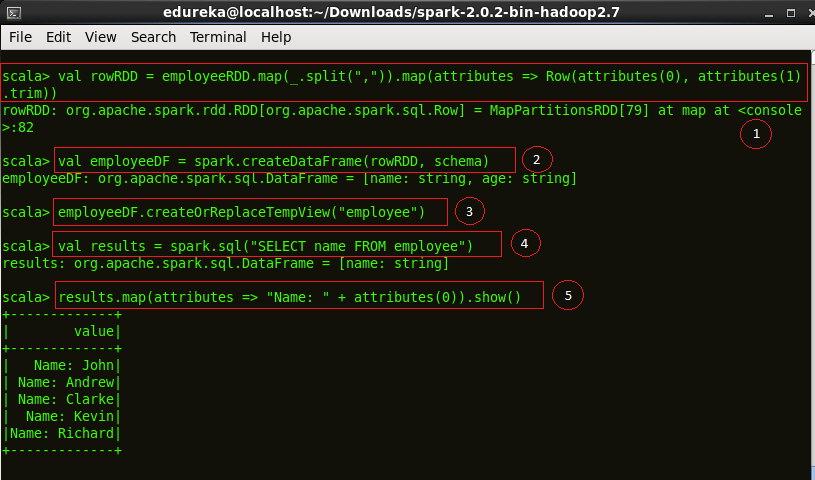

The `rlike` operator (applies to Databricks SQL and Databricks Runtime 10.0) returns true if `str` matches `regex`. It is similar to the `regexp_like()` function of SQL, and it can be used in a Spark SQL query expression as well.

To match one column against another, you can use `expr` / `selectExpr`, as in `df.selectExpr("a like CONCAT('%', b, '%')")`, or raw SQL against `df` registered as a temporary table, as in `sqlContext.sql("SELECT * FROM df WHERE a LIKE CONCAT('%', b, '%')")`. In Spark 1.5 it will require HiveContext.

Yes, Spark is case sensitive, as many RDBMSs are by default for string comparison. If you want a case-insensitive match, try `rlike` or convert the column to upper or lower case.

PySpark `when()` is a SQL function. To use it, first import it from `pyspark.sql.functions`; it returns a Column type, and `otherwise()` is a function of Column. Usage looks like `when(condition, value).otherwise(default)`. If `otherwise()` is not used and none of the conditions are met, the result is None (null).
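The two case-insensitive workarounds mentioned above (a regex, or normalising the case first) can be compared side by side in plain Python. This is an illustrative sketch on a small list rather than on a DataFrame column; the inline `(?i)` flag is the kind of pattern one could pass to `rlike`, on the assumption that the regex engine in use honours it.

```python
import re

names = ["Robert Williams", "Rames Rose", "Rames rose"]

# Option 1: a regex with the inline (?i) flag makes the match case insensitive.
by_regex = [n for n in names if re.search(r"(?i)rose", n)]

# Option 2: lower-case the value first, then do a case-sensitive comparison.
by_lower = [n for n in names if "rose" in n.lower()]

print(by_regex)  # ['Rames Rose', 'Rames rose']
print(by_lower)  # ['Rames Rose', 'Rames rose']
```

Both options select the same rows here; the second one needs the search term to be written in the same case the values were converted to.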

`Column` provides a `like` method, but as of Spark 1.6.0 / 2.0.0 it works only with string literals. Still, you can use raw SQL. The setup for a sample DataFrame looks like this:

    import org.apache.spark.sql.hive.HiveContext

    val sqlContext = new HiveContext(sc) // Make sure you use HiveContext
    import sqlContext.implicits._ // Optional, just to be able to use toDF

    val df = Seq(("foo", "bar"), ("foobar", "foo"), ("foobar", "bar")).toDF("a", "b")

Case-insensitive LIKE was added to Spark SQL under SPARK-36674 (Support ILIKE - case insensitive LIKE). From 3.3.0, the SQL ILIKE expression is also available on a PySpark DataFrame column as `ilike`, which returns a boolean Column based on a case-insensitive match.

A LIKE search pattern can contain special pattern-matching characters: `%` matches zero or more characters and `_` matches exactly one character. The RLIKE or REGEXP clause instead takes a regular expression search pattern. Null values can be replaced with `nvl(expr1, expr2)`, which returns `expr2` if `expr1` is NULL, or `expr1` otherwise.

In short, Spark and PySpark support the SQL LIKE operator through the `like()` function of the Column class, which matches a string value against single or multiple characters by using `_` and `%`. Similarly, you can also try the other examples explained in the sections above.
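To make the `_` and `%` wildcard rules and the case-insensitive variant concrete, here is a minimal plain-Python sketch that translates a LIKE pattern into a regex. It is a model of the semantics, not Spark's implementation: `like_to_regex` and `sql_like` are hypothetical helper names, and escape characters are deliberately not handled.

```python
import re


def like_to_regex(pattern: str) -> str:
    """Translate a LIKE pattern to a regex (hypothetical helper, no escape support)."""
    parts = []
    for ch in pattern:
        if ch == "%":
            parts.append(".*")           # % matches zero or more characters
        elif ch == "_":
            parts.append(".")            # _ matches exactly one character
        else:
            parts.append(re.escape(ch))  # everything else is a literal
    return "".join(parts)


def sql_like(value: str, pattern: str, case_insensitive: bool = False) -> bool:
    """Model LIKE (default) or ILIKE (case_insensitive=True) on one string."""
    flags = re.DOTALL | (re.IGNORECASE if case_insensitive else 0)
    # LIKE has to match the whole value, hence fullmatch rather than search.
    return re.fullmatch(like_to_regex(pattern), value, flags) is not None


print(sql_like("Rames rose", "%rose%"))                         # True
print(sql_like("Rames Rose", "%rose%"))                         # False
print(sql_like("Rames Rose", "%rose%", case_insensitive=True))  # True
print(sql_like("foo", "f_o"))                                   # True
print(sql_like("fo", "f_o"))                                    # False
```

The second and third calls show the practical difference: the same pattern misses `Rames Rose` under LIKE and finds it under ILIKE.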

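Below is an example of using the PySpark SQL `like()` function on DataFrame columns; you can also use the SQL LIKE operator in a PySpark SQL expression to filter rows. The argument specifies a string pattern to be searched by the LIKE clause. With an existing `spark` session:

    from pyspark.sql.functions import col

    data = [(3, "Robert Williams"), (4, "Rames Rose"), (5, "Rames rose")]
    # "id" is an assumed name for the first column; "name" is used by the filter
    df = spark.createDataFrame(data=data, schema=["id", "name"])
    # like() is case sensitive, so only "Rames rose" matches
    df.filter(col("name").like("%rose%")).show()

For reference, logical negation in Spark SQL (available since 1.0.0) maps true to false, false to true, and NULL to NULL, and the not-equal comparison returns true if `expr1` is not equal to `expr2`, or false otherwise.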
